Charity PC Build #1

Donating a PC to the Learn Engineering YouTube Channel

Last Change: 19/Jul/2018, 1138hrs

System received in India!! Posted 21/Jun/2018, arrived approx. 07/Jul/2018.

Check out Sabin's review of the system LE received!

Whoami: Ian Mapleson <mapesdhs@yahoo.com>

Tel: +44 (0)131 476 0796

http://www.sgidepot.co.uk/sgi.html

http://www.sgidepot.co.uk/sgidepot/

Amount Raised So Far: 258 UKP

Introduction

I have built a PC as a charitable donation for a YouTube channel I like, namely Learn Engineering (LE for short). LE produces high-quality educational videos which explain complex engineering topics in a simple manner, with the intention of fostering wider enthusiasm for engineering in general. The guys who create these videos work for an engineering company in Pune, India (so the shipping cost alone is a relevant factor, which in the event turned out to be 130 UKP by courier).

One can support LE directly on Patreon (I signed up; look for me at the end of their newer videos, I'm wearing an eBid T-shirt), but I decided I wanted to help much more directly. The reason for this is that I have long believed the field of engineering, along with related sciences & disciplines such as materials science, is sorely undervalued in the modern world, often pushed aside by other fields which garner greater publicity and funding, so I couldn't pass up the chance to help out. After talking at length with Sabin Mathew at LE, I concluded that even a moderate spend on a careful selection of parts (mostly used, some new) would produce a far better system than the one they have at the moment. Of course it would be great to send them something totally up to date like an X299 system or even a dual-XEON, but cost-wise that's not viable.


The aim of this page is to appeal for help from others to assist in covering the cost of what I'm building for LE, whether that's in the form of direct monetary donations, parts I can use in the build itself, or absolutely anything at all which I can sell to help fund the parts I want to buy. I now have all of the parts for the build (using a motherboard based on the Intel X79 chipset); I just need to sort out the overclock config and the OS setup. Even so, the more help I receive with this, the less I'll have to eat noodles. :D

I have considerable experience building PCs from used hardware (I do lots of benchmarking). Used hardware can offer a way to gain access to good performance on a greatly reduced budget, the key being to exploit the previous generation of high-end tech which used to be very expensive. Naturally, though, I will make no profit at all from this build.

I originally wanted to send the system to LE during Sept. 2016, but alas family events meant this was impossible; at the moment I plan on shipping the system before the end of Nov/2017. Many of the parts I had already bought, intending to use them for systems I was going to sell, but I'm using them for this donated build instead; this includes the motherboard, CPU, case, disks, one of the SSDs, fans and PSU, though some of these were changed as the build progressed. Other parts I've bought more recently. Note that I don't have any specific fundraising target, since I'm going to send them the system anyway, but clearly the more I can raise, the easier it will be on my own pockets. If I end up with a surplus, I'll use whatever's left over either to increase the spec or for some future charity build instead (I'm sure I'll help other channels as well). Perhaps I could grow this idea over time into a regular thing, who knows.

LE's current system is a generic HP box with an i3 CPU, 4GB 1600MHz RAM, an NVIDIA GT 610 2GB and a 500GB mechanical C-drive. They use Blender, GIMP and Camtasia to produce the engineering videos. Aside from the low-end CPU, low RAM and lack of an SSD (essential for a modern, responsive PC these days), the GPU is particularly weak. For those familiar with Blender, the GT 610 takes almost 31 minutes to compute the Blender BMW test, while rendering the test scene on their i3 CPU takes more than 9 minutes (their system scores 347 for the Cinebench R15 CPU render test; the system I've built for them scores 1389). Or to put it another way, the GT 610 is the second slowest GPU listed on the Octane Render benchmark page. :|

My goal was to send them something with at least double the CPU rendering performance (in the event it was four times faster), but more importantly a system with far greater GPU speed, especially for GPU-accelerated rendering in Blender, achieved by installing more than one GPU (the two GTX 980s I fitted are about two orders of magnitude faster than their existing GT 610). The system will also have a lot more RAM, SSDs, Enterprise SATA storage, provision for easy system backup and some other extras I'll mention later.
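The "four times faster" figure follows directly from the two Cinebench R15 scores quoted above. A minimal sketch of that arithmetic, assuming (as the text does) that the multi-threaded Cinebench score is a fair proxy for CPU render throughput; the function name is just illustrative:

```python
# Cinebench R15 multi-threaded CPU scores quoted above.
OLD_SCORE = 347    # LE's existing HP box (i3)
NEW_SCORE = 1389   # the donated XEON system

def speedup(new_score: float, old_score: float) -> float:
    """How many times faster the new system is (Cinebench: higher score = faster).

    Assumption: render throughput scales roughly linearly with the score.
    """
    return new_score / old_score

ratio = speedup(NEW_SCORE, OLD_SCORE)
print(f"CPU render speed-up: {ratio:.2f}x")
```

Cinebench is only a CPU test, so this says nothing about the GPU side, which is where most of the gain for Blender comes from.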

In reality, the LE guys used the CPU in their HP system for rendering in Blender. The system I sent them is seven times faster by utilising GPU rendering on the two GTX 980s.

Parts Donations To Use Or Sell

See below for the build I've done; I welcome any item at all which I can sell to help fund this build (it does not have to be computer related). So far one person has supplied some SGI RAM (some of which I've already sold on forums.nekochan.net), another has sent me some old PC gfx cards to sell, and of course I'm going to wade through my own stuff to see what I can sell off (I have at least two dozen items to add to the for-sale list below). Please contact me by email or phone if you can help (details above, or my full contact info page is here). I suspect this is probably the easiest way most people may be able to assist with this build.

Direct Donations

You can use PayPal (feel free to send whatever you wish to my new paypal.me address), bank transfer, cheque, etc. Please contact me by email or phone for details.

The Build

Here is a summary of the parts I've used for the PC, along with whether they're used or new, and how much they cost me. After the table I'll explain each item in detail.

Item New/Used Cost (UKP)

Antec 302 Case Used 45

Akasa Soundproofing material for side panels New 15

Thermaltake Toughpower 1000W Modular PSU Used 55

ASUS P9X79 WS Motherboard (X79 chipset) Used 168

Intel 10-core XEON E5-2680 v2 (3.1GHz Turbo default) Used 165

Corsair H80 CPU Water Cooler + 2x NDS 120mm PWM Used 48

GSkill 8x4GB DDR3/2133MHz CL11 RipjawsZ RAM Used 142

Palit GTX 980 4GB Reference, main display Used 215

EVGA GTX 980 4GB Reference, extra CUDA Used 216

Samsung 840 Pro 256GB SSD (C-drive) Used 53

Micron C400 256GB (backup of C-drive) Used 46

OCZ Vertex4 128GB SSD (Paging/Scratch) Used 25

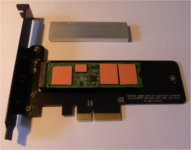

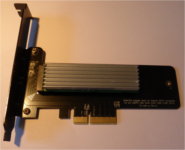

Samsung SM951 M.2 256GB PCIe NVMe SSD (scratch) New 80

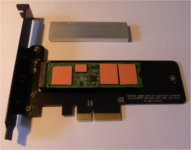

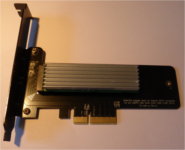

Akasa M.2 to PCIe adapter card with custom heatsink New 15

2x Seagate Enterprise ES.3 2TB SATA New 120

Seagate Enterprise ES.3 1TB SATA Used 29

Hitachi Deskstar 4TB SATA Used 68

Video-2-PC Analogue video USB capture kit New 35

Upper case intake fan (NDS 140mm PWM) New 10

5x Front/side/etc. fans (Corsair) New 25

Antec F8 8cm fan (chipset cooling) New 6

Startech 3.5"/5.25" front bay adapter New 4

Akasa 2.5" HotSwap Mobile Rack New 30

DVDRW drive New 15

BenQ GW2765HE 27" 2560x1440 IPS monitor New 210

Shipping cost to India via Landmark Global - 130

----------

1970 UKP

NB: If I were building all this using the latest equivalent technology from all-new parts, the cost would be well over 3000 UKP. As it is, the above system should be very potent, and a huge improvement over their existing PC.
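As a quick sanity check on the parts table, the individual costs can be summed to confirm the 1970 UKP total. A minimal sketch; the short item labels are my own abbreviations of the table rows:

```python
# (item, cost in UKP) pairs transcribed from the parts table above.
PARTS = [
    ("Antec 302 case", 45), ("Akasa soundproofing", 15),
    ("Thermaltake Toughpower 1000W PSU", 55), ("ASUS P9X79 WS motherboard", 168),
    ("Intel XEON E5-2680 v2", 165), ("Corsair H80 + 2x NDS 120mm", 48),
    ("GSkill 8x4GB DDR3/2133", 142), ("Palit GTX 980 (main display)", 215),
    ("EVGA GTX 980 (extra CUDA)", 216), ("Samsung 840 Pro 256GB", 53),
    ("Micron C400 256GB", 46), ("OCZ Vertex4 128GB", 25),
    ("Samsung SM951 256GB", 80), ("Akasa M.2 to PCIe adapter", 15),
    ("2x Seagate ES.3 2TB", 120), ("Seagate ES.3 1TB", 29),
    ("Hitachi Deskstar 4TB", 68), ("Video-2-PC capture kit", 35),
    ("NDS 140mm intake fan", 10), ("5x Corsair fans", 25),
    ("Antec F8 fan", 6), ("Startech bay adapter", 4),
    ("Akasa 2.5in HotSwap rack", 30), ("DVDRW drive", 15),
    ("BenQ GW2765HE monitor", 210), ("Shipping to India", 130),
]

total = sum(cost for _, cost in PARTS)
# Hardware only, i.e. what the parts cost before the courier bill.
excluding_shipping = total - dict(PARTS)["Shipping to India"]
print(f"Total: {total} UKP (without shipping: {excluding_shipping} UKP)")
```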

Note that when I first wrote this summary I suggested that the original 3930K would give a Cinebench R15 score of around 1100, but fitting the XEON instead has improved on this greatly, resulting in a final score of 1386 (that's faster than a stock Coffee Lake 8700K); see my benchmark page for comparisons, and note the page should be viewed with Page Style set to None from the View menu in your browser.

PC Case (Antec 302)

I'm very familiar with Antec 300/302 cases; I've used them in numerous builds. The 302 has an extra side panel grille behind the motherboard so one can fit a fan specifically for cooling the underside of the motherboard. Since the PC will be used in a warm environment, the added cooling will help. Despite liking the 302 case, though, I've never liked Antec's fans, so I replace them with better models, usually Nanoxia Deep Silence (NDS) or Corsair fans (both are low-noise; NDS fans are almost as good as Noctua but are 50% cheaper). The upper intake fan in particular is replaced with an NDS 140mm PWM (it works better, with less noise). Note the 2nd picture above was taken before I cleaned the case.

Soundproofing (Akasa Paxmate)

Noise in a working environment is always annoying. I always fit Akasa noise reduction foam to help minimise noise output from PCs I build (I haven't taken a picture of the box yet; will do that later).

Now that Sabin has received the system, I can confirm it is running very quietly.

PSU (Thermaltake Toughpower 1000W Modular)

For many years now I've been using 2nd-hand Thermaltake Toughpower PSUs for PC builds; they have been utterly reliable. As with all the used parts in this build, I completely clean the PSU before installation, and often replace the fan as well. Note that, as mentioned originally, I did indeed decide to use a 1kW PSU after all, mainly because the 850W model didn't have the right connectors to supply two GPUs, even though the total max power draw should be well within the capacity of an 850W unit. Sometimes the required connectors and cabling are more important. Also, the extra connectors mean the system could be expanded in the future with an extra GPU of some kind, assuming the LE guys' office isn't too warm.
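To illustrate why an 850W unit would have had enough capacity (it was only the connectors that ruled it out), here is a rough power budget. This is a sketch under stated assumptions: the GPU and CPU figures are the manufacturers' rated TDPs (165W per GTX 980, 115W for the E5-2680 v2), treated as a proxy for sustained draw, and the 100W "rest of system" allowance is a guess, not a measurement from this build:

```python
# Rough power budget, in watts. GPU and CPU entries are rated TDPs (assumed
# to be a fair proxy for sustained load); the last entry is a guessed allowance.
COMPONENTS_W = {
    "GTX 980 #1 (rated TDP)": 165,
    "GTX 980 #2 (rated TDP)": 165,
    "XEON E5-2680 v2 (rated TDP)": 115,
    "board, RAM, drives, fans (assumed)": 100,
}
PSU_W = 850  # the smaller Toughpower model that was considered first

load_w = sum(COMPONENTS_W.values())
utilisation = load_w / PSU_W
print(f"Estimated load {load_w} W = {utilisation:.0%} of an {PSU_W} W PSU")
```

Real peak draw can briefly exceed TDP, so a wall-socket power meter under a full render load would be the proper check.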

Motherboard (ASUS P9X79 WS)

As a result of Gigabyte's beta BIOS bricking the UD5 board I was originally going to use, I have upped the ante somewhat by replacing it with an ASUS P9X79 WS (I briefly replaced the Gigabyte with an ASRock Z68 board, but the WS became available not long after as a result of upgrading a friend's system). This is a top-end professional series board, basically equivalent to the Rampage IV Extreme except with a slightly different feature set (though it has most of the same overclocking options). This would originally have been an expensive board (over 300 UKP), but I obtained it a while ago from an ASUS refurb dealer for a good price (150 UKP). It didn't come with any accessories (hence the low cost); I just had to source an I/O shield, which was easy enough (18 UKP, bought via eBay US).

Earlier I had a 3930K on the board which, after overclocking, would have meant more heat to deal with, but using a XEON means this is no longer an issue at all. Indeed, the fans on the H80 will probably never spin up much at all (under load, CPU temps don't go over 45C with a room ambient of 20C). Since the LE guys' office is likely to be rather warm, this switch to a low-heat XEON is a wise move.

Note the original UD5 setup was to have included a VideoMate C500 SD PCI capture card, but this was not compatible with the UD5, and the X79 board doesn't have any normal PCI slots, so the C500 is not part of the build anymore. Ah well, the LE guys can always add a PCIe capture card later if they wish (there are plenty of spare slots), eg. something from Blackmagic Design.

Here are a few pictures of the ASUS P9X79 WS board installed in the system (these were taken before the SM951 was fitted and before the XEON upgrade):

Incidentally, to give you some idea how capable this board is, here's a picture of my CUDA research box: the same model mbd (with a 3930K @ 4.7 and 64GB RAM) but fitted with 4x MSI GTX 580 3GB (faster than two Titans).

The P9X79 WS really can handle heavy loads no problem, so stability should be good with this setup, which is of course important for running complex animation renders.

One final thing worth mentioning: I updated the BIOS on the board to a modded version which supports booting from PCIe NVMe devices such as the 960 Pro, SM961, etc. Thus, in the future, if they ever want to, the LE guys could replace the 840 Pro C-drive with something better (there's a spare slot on the board which would be suitable).

CPU (Intel 10-core XEON E5-2680 v2 @ 3.1GHz, with max 1-core Turbo of 3.6GHz)

After some battles trying to get a 4930K I obtained to work properly (I gave up and returned it for a refund; see the build log for details), I changed tack entirely and, after much research, decided a XEON was a better choice. It turns out availability of the E5-2600 v2 series is very good, and thus the cost quite low. I was originally going to use an i7 3930K, with an overclocked setup to achieve better performance, but with hindsight that would likely have introduced heat, noise and stability issues that could have become problematic in the future (a 4930K would have been the same).

Naturally, using a XEON instead of an i7 does mean a lower base clock, but this isn't a gaming system, so single-core performance is far less relevant. Heat, noise, power, stability, etc. are higher priorities, while trying to maximise threaded performance to boost rendering and video encoding. Also, since the 2680 v2 is an Ivy Bridge-EP part, it does provide PCIe 3.0 for the GPUs and PCIe SSD, which is good. Here is the Intel ARK info for the 2680 v2.

Note that even though a simple air cooler like a TRUE would be more than enough to cool this XEON, I'm still fitting the H80 AIO because this helps prevent transit damage, ie. an air cooler can wobble about too much, which risks damaging the motherboard, whereas a water cooler is held firmly in place.

I did of course investigate using Ryzen, but the platform is not suitable (not enough RAM or PCIe slots, too expensive overall). If money were no object, though, I'd be doing a Threadripper build for sure. :D But then we're talking 800 UKP just for the CPU. Besides, it's important to remember that the primary bottleneck for what the LE guys do is CUDA-based rendering in Blender (and video editing/encoding), and in that regard there is plenty of scope for upgrading the system, ie. just replace the GPUs with something better in the future.

CPU Cooler (Corsair H80 with Nanoxia Deep Silence 120mm PWM Fans)

I always use water coolers for my builds if I can; they are so much more effective than large air coolers, and make it much easier to manage the space inside a case. I was lucky to win a used H80 for a good price, which came with one Noctua NF-P12 120mm fan. Since this build was updated, though, to use an X79 mbd, which has extensive PWM fan headers, I decided it made more sense to use Nanoxia Deep Silence (NDS) PWM fans instead (the Noctua NF-P12 is only 3-pin).

Most importantly, as mentioned already, using a water cooler ensures safe transport.

Chipset Cooling (Antec F8 with custom mounting)

Using an AIO water cooler does have one downside vs. an air cooler, namely that the latter naturally blows some air across the mbd chipset, whereas this is not the case with an AIO WC. Thus, it is best to fit an extra fan to deliberately blow some air over the mbd chipset. An Antec F8 is ideal for this. Sometimes a case design might mean the top fan already provides enough air, but chipset heatsinks are such that the air may not be able to flow where it's needed. Thus, an F8 can force some of the air coming down into the case to blow onto the chipset heatsink next to the main ATX connector, and also onto the RAM modules in that area.

How to mount the fan, though? It turns out that just in front of the mbd are two very conveniently positioned holes in the case chassis, exactly the same distance apart as the holes on one side of an 8cm fan. I realised I could make some kind of supporting mechanism to hold the fan in position above the RAM modules. Thus, I took a metal mbd support rod (square cross section) removed from an SGI Octane, cut it to length in two pieces, modified the fan a bit at the corners (square indentations), attached the rods using superglue, then fitted plastic pieces to give extra strength and hold them in place, again using superglue. Lastly, I attached some pieces of foam to the underside of the fan's edges so that the plastic would not be resting directly on whatever is below (in this case, the RAM modules).

Re the 3rd and 4th pics above, in case you're wondering why the fan position looks slightly skewed, it's because I had to hold the metal rods in place by hand while the superglue was setting, and I didn't hold them perfectly straight. :}

Memory (GSkill RipjawsZ 32GB [8x4GB] DDR3/2133, running at 1866MHz)

As a result of changing the build to use an X79 mbd, the RAM is now different as well, namely a GSkill RipjawsZ 32GB (8x4GB) DDR3/2133 kit. The good thing about GSkill kits is that the warranty is transferable, so if the LE guys ever have a problem then they can get a replacement kit, or send it back to me and I can deal with it. Picture coming soon, but see above for pics showing the RAM installed on the mbd. The switch to a XEON CPU did sadly mean having to reduce the RAM clock to 1866MHz, but it doesn't seem to have affected performance, and I can likely tighten the existing 10/11/11/28/2T timings to compensate (not done this yet).
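One way to see why tightening timings can compensate for the lower clock is to compare the absolute CAS latency in nanoseconds. DDR transfers data twice per I/O clock, so one clock cycle lasts 2000 / (data rate in MT/s) ns. A minimal sketch, assuming the current 10/11/11/28/2T settings are at DDR3-1866 and treating CL9 at 1866 purely as a hypothetical target (whether this kit is stable there is untested); it also ignores the other timings and bandwidth:

```python
def cas_latency_ns(cl: int, data_rate_mts: float) -> float:
    """First-word CAS latency in ns.

    DDR transfers twice per I/O clock, so the clock period in ns is
    2000 / data rate (MT/s), and CL counts whole clock cycles.
    """
    return cl * 2000 / data_rate_mts

configs = [
    ("rated CL11 @ 2133", cas_latency_ns(11, 2133)),
    ("current CL10 @ 1866", cas_latency_ns(10, 1866)),
    ("hypothetical CL9 @ 1866", cas_latency_ns(9, 1866)),
]
for label, ns in configs:
    print(f"{label}: {ns:.2f} ns")
```

On these numbers, CL10 at 1866 is slightly slower than the kit's rated CL11 at 2133, while CL9 at 1866 would edge ahead of it.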

Primary GPU (Palit GTX 980 4GB Reference)

I originally considered fitting two GTX 580s, but the intention for the primary GPU was to have a card with good standard 3D/viewport speed. The GTX 580 is great for CUDA (due to various complex reasons I won't go into here), but newer cards are much faster for normal non-CUDA 3D tasks, which of course includes games, but also working with 3D models in Blender. Originally I listed a GTX 970 as the card I wanted to obtain, and indeed a GTX 970 is about 2X faster than a 580 for normal 3D, so a 970 was a sensible minimum target. However, further price drops in typical used GTX 980s meant I decided to try and obtain a GTX 980 instead (it's not much more, and the extra performance is fairly significant). Also, I don't know if the 970's split memory design would hinder the way in which Blender works if the available 4GB RAM was being almost entirely used, so I figured it was best to be certain (I know the design has virtually no effect on gaming, but pro tasks often behave differently).

Thus, I obtained a GTX 980. I was also successful in obtaining a second GTX 980 for the extra CUDA card, for almost the same cost as the primary 980 (details below).

Note that reference cards are preferred here because such cards vent most of their waste heat directly out the back of the case, so the air inside the case going through the CPU cooler is unaffected. Non-reference 980s with aftermarket coolers are certainly faster (eg. the EVGA in my gaming PC runs at 1266MHz, vs. the typical 1127MHz of a reference edition), but they dump too much of their waste heat (in some cases all of it) inside the case, which would affect the CPU cooling. Managing temperatures and cooling in this build is very important, because the office environment in India where it will be used can get quite warm.

Of course it would be great to fit something even more powerful like a GTX 1080 or somesuch instead, but one must be realistic. However, at least my original speculation about 2nd-hand price drops making 980s affordable turned out to be correct, though it's possible the supply is starting to dry up now; I noticed that people seemed, if anything, to be bidding slightly more for reference-cooler 980s compared to a few weeks ago, so lack of supply may be pushing up perceived value even if newer products ought to be making used 980s cheaper (the 2nd-hand market is still subject to the laws of supply & demand). Update: not long after writing this text, someone won a reference 980 auction on eBay for the crazy sum of 275 UKP, which is almost as much as people had been bidding on the lower side for 980 Ti cards. More recently, the Ethereum mining craze has pushed up all used GPU prices, so I was lucky to get the two 980s when I did.

Note I am using NVIDIA cards because the drivers are more reliable, and the CUDA acceleration in Blender is more complete and faster than OpenCL. The power consumption is also better. AMD has come a long way with its compute performance in recent years, but it's not quite there yet, and driver reliability is still an issue.

Secondary CUDA GPU (EVGA GTX 980 4GB Reference)

Happy to report I was able to win a second GTX 980 auction for 216 UKP, an almost identical cost to the primary GPU. This does increase the cost somewhat, but it means much better CUDA rendering performance overall.

Rendering performance in Blender is very important for the work LE does. I originally began this project with two GTX 580s in mind, because they're so strong for CUDA yet reasonably cheap, but over time I decided that something far more power efficient and cooler would be better given the warm environment where the PC will be used. And at least having two 980s means all aspects of processing will have the same higher 4GB VRAM limit (the 580s I'd originally planned on using only have 3GB). Just for reference, though, my own CUDA research machine (which is faster than two Titan Blacks) has four GTX 580 3GB cards. A 580 is faster than all the 600 series cards for CUDA, and the only 700 series cards which beat it are the 780 Ti and Titan. By comparison, a good GTX 980 is about 10% slower than two 580s combined, the latter being quicker than a Titan. However, despite the low cost and potent performance of 580s, they're best used where heat is less of a concern, and the 580 does use quite a lot of power. Thus, I'm glad I will be able to fit two 980s, despite the higher initial cost (power consumption cannot be ignored here).

Two 980s will provide a huge speed increase for Blender rendering over LE's existing system.

The relative performance of different GPU combinations can be compared using the OctaneBench, Arion and Blender BMW benchmarks. Overall, the two 980s combined provide about the same CUDA rendering performance as a single reference 1080 Ti, for a much lower cost.

Note that the LE guys don't use the GPU in their existing HP system because it's too slow; they use the CPU instead. The system I supplied, though, has allowed them to do renders at least seven times faster via GPU rendering, though optimising the render settings is critical for best performance (mainly choosing sensible tile sizes, larger generally being better for GPU rendering, and also trying to avoid fractional tiles being processed).
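Picking a tile size that divides the frame evenly avoids partially filled tiles along the edges. A minimal sketch of that calculation, assuming a hypothetical 1920x1080 output and the 4x3 grid approach mentioned in the build log; the helper names are illustrative, and the resulting numbers would be typed into the Tiles X/Y fields of Blender's Performance settings (as found in Blender 2.7x-era versions):

```python
import math
from typing import Tuple

def tile_size(width: int, height: int, cols: int, rows: int) -> Tuple[int, int]:
    """Tile width/height that split a width x height image into cols x rows tiles.

    Rounds up, so the grid always covers the whole image.
    """
    return math.ceil(width / cols), math.ceil(height / rows)

def has_partial_tiles(width: int, height: int, tile_w: int, tile_h: int) -> bool:
    """True if the image edges would be covered by partially filled tiles."""
    return width % tile_w != 0 or height % tile_h != 0

# Hypothetical 1920x1080 render split into a 4x3 grid (large tiles suit GPUs).
tw, th = tile_size(1920, 1080, 4, 3)
print(tw, th, has_partial_tiles(1920, 1080, tw, th))
# A small, CPU-style 64x64 tile leaves partial tiles along the bottom edge.
print(has_partial_tiles(1920, 1080, 64, 64))
```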

C-Drive SSD (Samsung 840 Pro 256GB)

I won a used 840 Pro 256GB for a decent price. Of course it would be great to use a 500GB/512GB model, but that would cost much more, and make the backup SSD more expensive too. Besides, the idea of this build is to encourage data to be stored where it makes the most sense, so the C-drive does not have to be large.

Backup C-Drive SSD (Micron C400 256GB)

Reliable system/data backup is very important for any PC user. In this case the idea is to allow the LE guys to do a full C-drive clone backup to a 2nd SSD without having to power cycle the PC, via a trayless hot-swap bay. The backup SSD is a Micron C400 256GB.

Windows Paging and Scratch Area SSD (OCZ Vertex4 128GB)

Windows always uses virtual memory in the form of a paging file, which can be large. Normally this uses up a lot of space on the C-drive, especially in systems with a lot of RAM. Thus, I like to fit a separate SSD to hold the paging file; the partition for it should be 1.5X the main RAM capacity (ie. in this case, 48GB for a system with 32GB RAM). This frees up space on the C-drive and reduces wear on the C-drive as well. An OCZ Vertex4 128GB is ideal for this, given its high IOPS rating.
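The 1.5X rule of thumb above, and the space it leaves over for scratch use, works out as follows. A minimal sketch; it uses the SSD's nominal 128GB, so the usable scratch space after formatting will be somewhat smaller:

```python
def paging_partition_gb(ram_gb: float, factor: float = 1.5) -> float:
    """Paging-file partition size using the 1.5x-RAM rule of thumb from the text."""
    return ram_gb * factor

RAM_GB = 8 * 4     # 8x 4GB GSkill modules
SSD_GB = 128       # OCZ Vertex4, nominal (marketed) capacity

paging_gb = paging_partition_gb(RAM_GB)
scratch_gb = SSD_GB - paging_gb   # remainder for the scratch/temporary area
print(f"Paging partition: {paging_gb:.0f} GB, scratch area: about {scratch_gb:.0f} GB")
```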

The unused space on the paging file SSD can then be used as a general scratch/temporary working area for everyday use, eg. a destination for reliable video capture, output from a render or video conversion, etc.

Video-editing and Render Scratch SSD (Samsung SM951 256GB)

I'm going to include a Samsung SM951 256GB PCIe NVMe SSD, held in an Akasa PCIe adapter. The LE guys can use this as the main working area for whatever video or animation data they are dealing with, eliminating any I/O bottlenecks. Once a piece of work is complete, the final results can be moved to one of the 2TB SATA drives for longer-term storage.

I hadn't originally planned on adding this, but after the Gigabyte BIOS debacle I decided to beef up the spec a bit, just as a sort of finger in the eye of the forces of entropy. :D

In the pics above, a heatsink is included to prevent thermal throttling (useful when copying large files); I moved the product label to the back of the adapter card.

Here are some benchmark screenshots from AS-SSD, ATTO and CDM using an SM951 on an ASUS Rampage IV Extreme (a different mbd, but the same X79 chipset and Samsung 2.2 drivers, so the results should be basically the same):

I will install the SM951 after the CPU/RAM configuration and testing is completed.

Enterprise SATA Storage (Seagate 2TB/1TB ES.3)

Consumer mechanical drives (or rust spinners as I call them) are cheap, but this is for good reason, ie. lower reliability. Thus, I constantly try to obtain unused or barely used Enterprise SATA drives, which are fast but also a lot more reliable, and in many cases still have valid end-user warranties. The Seagate ES.3 series is perfect for this role, and I was able to obtain a couple of new drives for very good prices (half what they normally cost new).

The 1TB drive is for general data backup, eg. normal snapshot file images of the C-drive, important user files, etc.

General SATA Storage (Hitachi Deskstar 4TB SATA)

I had an opportunity to bag a couple of Hitachi drives for a good price, so I decided, what the heck! This should make the LE guys' ability to manage their data a bit more flexible, eg. they could use the two Seagate 2TB disks for their main storage and use the Hitachi for backup. Alas no picture, I forgot.

Video-2-PC DIY Analogue-SD-to-USB Video Capture Kit (seller link)

I would have preferred to include an HD capture kit, but I just couldn't find a product that was reportedly reliable. By contrast, this particular device works well, and the supplied software is genuinely easy to use (very rare these days). Thus, if the LE guys want to incorporate any kind of real-life video into their YouTube videos, they can at least do that in SD.

Upper Case Intake Fan (NDS 140mm PWM)

The default Antec intake fan is not very good, so I replace it with something better. The NDS 140mm PWM costs half as much as a Noctua but works almost as well, ie. good performance and low noise.

5x Front/Side/etc. Fans (Corsair/NDS)

Some of these fans have come from Corsair H80/H100 water cooling kits where I fitted NDS fans instead, so they're basically spare. Some models of Corsair fan can be rather loud, but I have several which are much better. Properly configured so that the fans only spin up when temperature conditions demand it, the system should operate with minimal noise.

The fan on the far side of the case is an NDS 120mm, while the two front fans and main side panel fan are all Corsair.
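"Only spin up when temperature conditions demand it" usually means a fan curve: PWM duty is held low at idle and rises with temperature between a few set points. A minimal sketch of linear interpolation between such points; the curve values here are hypothetical, not the settings used in this build, and on this system the equivalent points would be entered in the motherboard's fan-control settings rather than in code:

```python
from bisect import bisect_right

# Hypothetical (temperature C, PWM duty %) points; not the settings used in this build.
CURVE = [(30, 25), (45, 35), (60, 60), (75, 100)]

def fan_duty(temp_c: float, curve=CURVE) -> float:
    """Linearly interpolate a PWM duty cycle (%) for a given temperature."""
    temps = [t for t, _ in curve]
    if temp_c <= temps[0]:
        return float(curve[0][1])      # idle floor: fans stay slow and quiet
    if temp_c >= temps[-1]:
        return float(curve[-1][1])     # full speed at or above the last point
    i = bisect_right(temps, temp_c)    # curve[i-1][0] <= temp_c < curve[i][0]
    (t0, d0), (t1, d1) = curve[i - 1], curve[i]
    return d0 + (d1 - d0) * (temp_c - t0) / (t1 - t0)

for t in (25, 45, 52.5, 80):
    print(f"{t} C -> {fan_duty(t):.1f}% duty")
```

A low, flat start to the curve is what keeps a cool-running XEON system quiet most of the time.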

Startech 3.5"/5.25" Front Bay Adapter

This is used to hold the next item within a 5.25" drive bay.

Akasa 2.5" HotSwap Mobile Rack

Blimey, I forgot to update this section! As a result of adding an extra 4TB drive late in the day, there were no longer enough spare SATA ports to connect two hot-swap bays, so I replaced the 2-bay Startech unit with a single-bay Akasa unit. I'll add pictures later.

This fits into a single 5.25" front bay and provides a hot-swap 2.5" trayless bay, ideal for C-drive backup or other temporary SATA device access.

DVDRW Drive

I would fit a BDRW (Blu-ray burner), but I don't think they need it and the cost is much higher. However, this might change later if I can find a decent used BDRW unit.

BenQ GW2765HE 27" QHD LED IPS Monitor (seller link)

Something I had planned from the very beginning was to provide the LE guys with a much better monitor, a type with good image quality and a resolution high enough that they could work with full HD video without having to use proxies. This of course meant an IPS panel at 2560x1440. I did initially obtain a Dell U2711 off eBay for this purpose, for 150 UKP, but alas the unit had a fault, and time constraints meant I was unable to deal with the issue (I eventually gave it to a friend on the off chance he could fix it and use it for himself). In the end I concluded it made more sense just to buy a completely new unit, since pricing had come down to reasonable levels, ie. buying a used model wasn't really saving that much.
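The reason 2560x1440 suits full HD work is that a 1920x1080 frame fits on screen at 1:1 with room left over for timelines and tool panels. A minimal sketch of that arithmetic; how much of the spare area a given editor's layout actually leaves for the preview is a separate matter not modelled here:

```python
MONITOR = (2560, 1440)  # BenQ GW2765HE native resolution
FULL_HD = (1920, 1080)  # full HD video frame

spare_w = MONITOR[0] - FULL_HD[0]   # horizontal pixels left beside a 1:1 preview
spare_h = MONITOR[1] - FULL_HD[1]   # vertical pixels left below it
area_ratio = (MONITOR[0] * MONITOR[1]) / (FULL_HD[0] * FULL_HD[1])
print(f"1:1 full HD preview leaves {spare_w}x{spare_h} px spare; "
      f"{area_ratio:.2f}x the pixels of a 1080p screen")
```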

Naturally, safe packaging for a monitor is essential, especially since couriers generally won't provide damage cover for monitors (only total loss). This BenQ fit the bill nicely, so I hope the LE guys received it ok. I packed the original monitor box inside an outer box, so it should be well protected.

Misc Internal Cables

PWM fan splitter and extension cables are needed in order to connect various fans to the motherboard while maintaining a tidy layout. An auxiliary motherboard power extension cable is used for similar reasons.

Pictures

In addition to the various pics above, here are some more showing the installed storage devices, PSU connections (room for future expansion!), rear chassis cabling, etc., and how the side panels appear with the side fans connected before the panels are closed into position.

Comments, questions, suggestions, and of course donations/parts, all most welcome! 8)

Ian.

PS. For those who may not know, I am based in Edinburgh, Scotland.

-------------------

SGI Guru

mapesdhs@yahoo.com

+44 (0)131 476 0796

+44 (0)7434 635 121

Build Change Log

16/Jul/2018:

Delighted to report both boxes (system and monitor) were received ok, all intact, and Sabin has the system up and running. 8)

My main worry was potential damage during shipping, but in the event (due to my nuke-proof packaging) the only minor issue was a single fan cable that had come away from its fan-splitter connector, an easy enough fix. Sabin has uploaded a video about the system:

https://www.youtube.com/watch?v=g2WiJNEZLNY

Note that Sabin didn't know I was sending him and his colleagues a monitor as well; that was a last-minute surprise. :D

Btw, one minor point about the video: the BMW render test Sabin mentions is

comparing CPU-based rendering speed on their existing HP box (details at the top

of this page) vs. GPU-based rendering speed on the system I sent. The LE guys

don't use the GPU in their HP box for rendering as it's way too slow (the GT 610

is the 2nd slowest card listed on the Octane

Benchmark page). For the curious, the XEON I put in the system would complete

the BMW render in about 1m 53s, though it's also worth noting that running the

test on the GPUs (2x GTX 980) can probably be sped up further via optimised

settings (small tile sizes are best for CPU rendering, but larger tile sizes are

best for GPU rendering, eg. for the BMW test I use a tile size which splits the

image into a 4x3 grid).
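For anyone wanting to reproduce the GPU tile setting, here is a minimal Python sketch of the arithmetic behind "a tile size which splits the image into a 4x3 grid". Blender asks for the tile dimensions in pixels, so the grid has to be converted into a width and height. The 1920x1080 resolution and the 60x34 CPU grid are placeholder assumptions for illustration only, not the settings of the actual BMW test file.

```python
import math


def tile_size(width: int, height: int, cols: int, rows: int) -> tuple[int, int]:
    """Return (tile_width, tile_height) in pixels for a cols x rows grid.

    Dimensions are rounded up so the tiles always cover the whole image.
    """
    return math.ceil(width / cols), math.ceil(height / rows)


# Placeholder resolution (an assumption, not the BMW test's real setting).
WIDTH, HEIGHT = 1920, 1080

# GPUs: a few large tiles, here the 4x3 grid mentioned above (12 tiles).
gpu_tile = tile_size(WIDTH, HEIGHT, cols=4, rows=3)

# CPU: many small tiles so every thread stays busy (hypothetical 60x34 grid).
cpu_tile = tile_size(WIDTH, HEIGHT, cols=60, rows=34)

print(f"GPU tile: {gpu_tile[0]}x{gpu_tile[1]} px")
print(f"CPU tile: {cpu_tile[0]}x{cpu_tile[1]} px")
```

With these placeholder numbers it prints 480x360 for the GPU grid and 32x32 for the CPU grid; plug in the real output resolution of the scene to get the actual values.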

Anyway, I am delighted the system was received ok. There were of course a few

things that fell by the wayside during the process of finalising all this. I had

meant to include a pair of Logitech X140 speakers, but completely forgot when it

came time to pack everything up, though with hindsight there's no way the speakers

could have fitted in either of the boxes. Also, reading some of the comments on

Sabin's video, I realise I never thought at all about a microphone for audio

recording. I included a USB SD video capture dongle, which works well, but

including a mic didn't occur to me. Audio isn't my field; I wouldn't know where to

begin with respect to what is sensible for such tasks. However, I do have some

movie company contacts, so I'll ask around, see if someone has an older (but still

decent) studio mic they could donate.

Apart from that, the only other thing was that a few of the product packing boxes

could not be included as there wasn't space in the two shipping boxes, namely

those for the H80 cooler, some of the NDS fans and the Akasa memory card reader

unit. Ah well, no matter. I normally do like to include all original product boxes

if I can so that the recipient can more easily sell on items if/when they

eventually decide to upgrade, etc.

So, this project is finally complete. It took a lot longer than originally anticipated,

mainly due to repeated family events that sidetracked my time on multiple occasions,

especially several family bereavements. Perhaps that's why I ended up sending a

much better system than I'd originally intended, a poke in the eye of Murphy and

his insidious law. :D

Best wishes all, and thanks for your support! I look forward to seeing what the LE

guys can do in the future. In the meantime, I do have a great many more items to

add to the for-sale section on this page (again, time has prevented me from

writing them up), so I'll get back to listing them towards the end of this month;

still hoping to cover a tad more of the cost if I can. :D My thanks to everyone

who's contributed so far!!

Cheers! :)

Ian.

04/Jul/2018:

The tracking info shows both boxes have reached the Customs dept. in India; Sabin is now trying to contact the

relevant agency to arrange clearance. Almost there! 8)

29/Jun/2018:

My heavens, how quickly time flies! Apologies for the lateness of this update. Two further

family bereavements after new year set back my plans for this project by quite a long while, plus some

other family matters I had to deal with (helping an elderly relative). In the event, I wasn't

able to get back to sorting out the system until May, then it took a while longer to find the right timing

to pack and post it, which by now also included a monitor. Finally during the 3rd week of June I was

able to get everything ready, so I posted the system and monitor on 21/Jun. 8) As I type this, the

LE guys have not yet received it (probably being slowly processed through Indian Customs, which could

take a while), but I will update this page as new info appears on the tracking site.

Note that the POST issue mentioned previously stopped occurring, and the compatibility issue I referred

concerned the Dell monitor I initially obtained which turned out to be faulty (it would go into power

saving and not recover), so as stated above I solved the monitor issue by virtue of just buying something

new (a BenQ). The system works fine with the BenQ, and the desktop, etc. has all been set up ready to use.

The irony of this project now is that I've ended up with a significant collection of items to sell off

to help pay for it, but I never had the time to get them listed. :D It did turn out to be a rather

pricey venture I suppose, but I'm sure well worthwhile in terms of what the system will enable the LE

guys to do with their work.

Note that it is of course easy to look at the above spec and point out the existence of CPUs such as Ryzen, but

the current high cost of DDR4 RAM and the huge spike in GPU pricing have meant that the system I put together

continues to hold its own in terms of value and price/performance. Indeed, the CPU I fitted (the 10-core XEON) now

tops my own benchmark results page for threaded

performance. The later generic system the LE guys bought might be quicker for single threaded tasks, but for

Blender rendering, video processing, etc., the XEON is a good choice and was well priced. Indeed, I upgraded my

brother's gaming PC with the same CPU. :D What I particularly like is that, since it is not an overclocked system

now, it runs nice and quiet, which certainly would not have been the case with an overclocked 3930K, and of course

it means the power consumption is more sensible as well, which means it'll be more efficient (and cope better with

a warm working environment). An additional benefit of the XEON is that the model in question supports native PCIe

3.0, so the GTX 980s can run at full speed, which is good.

So, that's the project complete! The system is away. 8) I'll add some pictures later showing the final

setup, and the stages of getting the PC and monitor packed up for posting by courier.

My thanks to all those who helped with this, donating parts to sell or indeed cash. I'll keep going with the

items-for-sale thing though as I'm nowhere near covering the cost of the system at the moment. :D I have many more

items to add to the list, but I won't be able to do that until late July as I'm away at the moment dealing with

family matters.

Btw, I only noticed today that I forgot to upload this page after the last update in Dec/2017, sorry about that! :}

22/Dec/2017:

My plan to send the system before the xmas holidays went slightly

askew. There was one more major item I wanted to include, which I did

obtain, but there's a compatibility issue I need to resolve before it's

ready to go (I don't want to mention it here so that it'll be a

surprise). Meanwhile, the system itself has developed an odd habit of

failing POST with a B6 code, which refers to Option ROM initialisation;

not sure what's causing this, but I need to resolve it before posting.

Could be something simple like one of the hard disks needs a firmware

update, or perhaps the Marvell SATA3 controller needs updating. Either

way, I'll get it sorted after xmas. Apart from this, the system is

basically ready.

12/Oct/2017 (XEON Strikes Back!):

Getting the faulty 4930K replaced was a no-go, Intel couldn't help (the warranty expired back in

March), but in the event I was at least able to return the CPU for a refund.

However, after some further research I started thinking about XEON options. It's a bit hard to pin

down precise performance details as most reviews tend to cover dual-socket boards, whereas I was

looking for single-socket data, but then I found this very interesting YT video comparing a

SandyBridge-EP XEON E5-2680 vs. the i9 7900X. The results convinced me a XEON would be a good

alternative, all the more so because I quickly discovered the later 2680 v2 (stock

image) was readily available at low prices, and it has two more cores plus a higher base clock. Even

better, the v2 edition is based on IvyBridge-EP, thus it does support PCIe 3.0 and has the same IPC as

IvyBridge CPUs like the 4930K.


The benchmark data I found suggested that a 2680 v2 would, for threaded tasks like rendering and video

encoding, actually be faster than a 3930K @ 4.8GHz (a level of overclock that I had no hope of

achieving back when this build still had a 3930K). Thus, compared to the CB R15 target of 1100

that I'd thought possible with a 3930K @ 4.5GHz, using a 2680 v2 instead has resulted in a

score of 1386, some 26% faster (and that's without optimised memory timings), which btw is faster than

the latest Coffee Lake 8700K (it scores 1364). Another data point: the 2680 v2 scores 15.45 for CB 11.5, compared to 13.8 for a 3930K @ 4.8GHz, or 15.37 for the 8700K.
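The percentages follow directly from the ratio of two scores. Here's a minimal Python sketch using only the Cinebench figures quoted above (bear in mind the 1100 figure was a target I'd hoped to reach with a 3930K, not a measured result):

```python
def percent_faster(score: float, baseline: float) -> float:
    """Percentage by which `score` beats `baseline` (Cinebench: higher is better)."""
    return (score / baseline - 1.0) * 100.0


# Scores quoted in the text: (2680 v2 score, comparison score).
comparisons = {
    "CB R15: 2680 v2 vs 3930K @ 4.5GHz target": (1386, 1100),
    "CB R15: 2680 v2 vs 8700K": (1386, 1364),
    "CB 11.5: 2680 v2 vs 3930K @ 4.8GHz": (15.45, 13.8),
    "CB 11.5: 2680 v2 vs 8700K": (15.45, 15.37),
}

for label, (score, baseline) in comparisons.items():
    print(f"{label}: {percent_faster(score, baseline):+.1f}%")
```

This gives +26.0% and +1.6% for CB R15, and +12.0% and +0.5% for CB 11.5, so the XEON's lead over the 8700K is small, while the gain over the overclocked 3930K is substantial.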

As it happens I'm working on several X79 builds at the moment, all of which are going to be used

for various types of content creation, so I decided to change them all to the 2680 v2 (previously

they had a mix of 3930K and 3970X CPUs, which I can sell off to help cover the cost of the XEON

replacements); buying several XEONs reduced the unit price considerably, from 225 each down to

165.

Overall I am pleased with this final CPU choice. Since XEONs are normally not overclocked (there

is an exception, the 1680 v2, which has an unlocked multiplier, but it's very hard to find and far

too expensive), it means the system will be much more stable, the CPU load temperatures are far

lower, hence less heat, and the fans can run at a low speed, so less noise and better long term

reliability of both the AIO and the fans. It's a win all round. 8)

Thus, what's left to do now is to set up the AI Suite II software to more properly control all the

various fan speeds based on thermals, optimise the memory timings (I'll probably end up with

something like 8/9/9/24/2T), and install the SM951. Then that's it pretty much done. I was going

to say I could post it before the end of October, but I am away at that time visiting family for a

birthday, so more likely I'll post the system in early November. Hurrah! 8)

Before posting the system, I'll update this page with final performance info, etc. The data will be

added to my main results page, so keep an

eye out.

25/Sep/2017:

Family matters back in Aug held things up again. Anyway, back to it! Main thing is I decided

at the end of August, what the heck, I may as well try and get hold of a 4930K as a final

upgrade perk to the spec, to give it proper PCIe 3.0, so the GTX 980s will run at full speed and

so will the PCIe SSD. I did obtain a 4930K, but alas it's not working properly: it fails to

correctly recognise memory bank A (checked with other mbds and RAM to be sure). Alas the

chip is out of warranty (ended in March, rats!), so atm I'm asking around to see if anyone

can help with a replacement, ya never know. Here are a couple of BIOS pics:

The first image shows the system only recognising 24GB out of the 32GB installed RAM, the

second showing the "Abnormal" DIMM slots (for those who've seen this issue before, in

Windows the Properties panel of the PC will show the lower amount of RAM, while CPU-Z can see

all the RAM). The CPU behaves the same way on some other X79 boards I checked with, and

different RAM, so it's definitely the chip at fault. I contacted the original retail source,

they reckon the chip's IMC is bad. Anyway, hopefully I can find a replacement. Would be nice

if the system was able to run the PCIe slots at 3.0 speed. I'll update later this week with

more details.

03/Aug/2017:

Added the pics of the SM951, and benchmark results for the SSD tested on a different

mbd using the same chipset.

15/Jul/2017:

Alas more delays due to family matters, but anyway, some major changes! 8)

As is probably evident from the previous update, I was rather frustrated at the

Gigabyte debacle, re their beta BIOS trashing the original board. I did replace

it with an Asrock board and that worked ok, but really the Asrock Z68 Extreme7

is a bit OTT for a system like this (the Extreme7 is for more high-end gamers),

and I'd rather keep the Asrock for some of the SATA testing I want to do.

A couple of weeks ago I had cause to upgrade a friend's X79 system, changing

the existing ASUS P9X79 WS to the revised P9X79-E WS, and swapping the 3930K

CPU for a 4930K. The source mbd, a system I built a while ago for CUDA testing,

had 32GB RAM (8x4GB) which was moved onto the older board. Afterwards, I got

thinking about the P9X79 WS and decided, what the heck, as a two-fingers up

to the agents of mbd entropy, I'd fit it into the LE machine. I did have to follow

the proper Microsoft procedure for re-registering the Win7 license, but that

worked ok. Updating the drivers on the 840 Pro was fairly easy as well. The system

is now basically done hardware-wise, I just need to sort out the overclock.

Thus, the LE machine is now a 6-core 3930K system with 32GB 2133MHz RAM, on

a far more professional board. This should mean better long term reliability.

The board did not come with its original box, so no fancy pics of that, but I'll try

and locate an original manual if I can. All the other components

are the same, except there is now an additional 8cm Antec F8 fan for cooling the

chipset near the main ATX power connector (I constructed a custom mounting so that

it could be secured to two holes in the chassis frame; thin foam pads ensure it sits

firmly on the DIMMs, but with a small gap to aid air flow).

One final change, just to poke an extra finger in the eyes of the mbd

destruction gremlins: I've fitted a Samsung SM951 256GB PCIe NVMe SSD to act as

the primary location for holding video data during editing. It may help with

renders as well. Either way, this will certainly remove any I/O bottlenecks from what

the LE guys want to do. The SM951 does about 2GB/sec.

With the extra PCIe slots available on the P9X79 WS, I did consider sourcing a

third GTX 980. However, given the thermal environment in which this system will be

used, I figured that would be unwise. Thus, in the future, if the LE guys want

faster CUDA performance, it makes more sense to just replace the 980s entirely with

something better.

I'll add pictures of the changed/extra components over the next few days.

27/May/2017:

Sorry again for the lack of updates. A family bereavement back in mid-Feb meant

I had to put this project aside for a while.

Over a period of weeks Gigabyte eventually asked me to try a beta BIOS they

had for the mbd, but the BIOS broke the board, forcing it into an even

worse on/off power cycle (even without the C500 fitted). I was dealing with

the support people in Germany. They asked me to contact Gigabyte UK and

request an RMA, explaining the circumstances. However, Gigabyte UK were not

forthcoming, stating that the board was out of warranty, etc., basically

ignoring the fact that their own staff had wrecked the board. They said

they could do a BIOS reflash, but would charge a fee, shipping, and there

wouldn't be any guarantee the board would be functional afterwards. Thus,

after some mulling of options, I decided to forget the Gigabyte board

entirely (I've changed the build details above, will add some pics of the

mbd fitted inside the system next week).

I mentioned in the previous update that I had tested the C500 on an Asrock

board and it worked ok. Hence, I have switched to using the Asrock board

instead, though alas with two 980s fitted there is no room for the C500

because the 2nd 980 blocks the PCI slot. Thus, the C500 will no longer be a

part of this build (info for it above has been removed). It was a nice idea

to be able to include basic SD video capture, but I guess the LE guys can

always obtain a PCIe capture card in the future if they wish (I didn't

originally go for a PCIe card because all the reviews I read of such cards

were distinctly unfavourable). Alternatively, they could fit a third GPU

in the other main PCIe slot for additional CUDA.

So, the mbd in the system is now an Asrock Z68 Extreme7. This is a much

higher end Z68 board than the Gigabyte model, but I suppose it means the

overclock setup should work better. One advantage of the Asrock board is

that it does have more SATA ports, hence the PCIe SATA3 option card is no

longer required (details above removed).

Since the C500 is no longer a factor, the main remaining task is to sort

out the overclock configuration, which I will do during the next two weeks,

conducting extensive testing to ensure stability.

14/Feb/2017:

Apologies for the absence of updates, alas family matters took up much of

my time after the xmas break, and such matters are still ongoing. However,

at the moment I'm still in discussions with Gigabyte, hoping they will

supply a custom BIOS to support the C500 PCI card, so things are a tad on

hold anyway.

Gigabyte asked if I could test a different Z68 board and indeed I did so,

using an Asrock Z68 Extreme7 (it has one PCI slot), on which the C500 card

worked perfectly ok. Hence, I know there is no general incompatibility

between the C500 and Z68 chipsets, something Gigabyte initially suggested

might be an issue. Instead, it is far more likely to be a BIOS support

issue specific to the Gigabyte board, as described by the user absic

on the

Gigabyte Forum.

Thus, it boils down to whether Gigabyte are willing to supply a custom BIOS

to support the C500 card. If they do, then great, the system would then

basically be ready, I just need to finalise the overclock, the OS setup

(software for the C500, disk config, backup), etc. If however Gigabyte say

they can't supply a custom BIOS, then I'll have to use the Asrock board

instead; I'm not sure atm what this would mean for the spec I've so far

been going with, since the Asrock board has a very different PCIe slot

layout, ie. it may not be able to utilise two GPUs without blocking the

PCI slot. It would certainly mean a further delay re having to swap out the

parts, and of course redoing the driver setup. Anyway, I'll see what

happens. Hopefully I'll hear back from Gigabyte by the end of this week.

Meanwhile, I sold a couple of items from the for-sale list! Yay! My thanks

to Mr. Andrew Heath. 8)

12/Dec/2016:

I've run into a bit of a hitch with the build atm. If I try to fit

the C500 PCI capture card into either of the PCI slots, when the

power button is pressed there is a brief moment of activity

before the system immediately shuts off again. No idea why,

still looking into it. I've checked the C500 PCI card with a

different system (old P55 setup) and it works ok, so it's not

the C500 itself. Maybe something in the BIOS, or a mbd short

somewhere. Feel free to email me if you've any thoughts!

Apart from the above though, the rest of the hardware is in

place, including the PCIe SATA3 card. Not yet sorted out the CPU

overclock, but the system is running ok. Need to read up on the

Gigabyte BIOS setup, I'm too used to doing this with ASUS

boards, though I should be able to more or less copy the

settings I use for ASUS M4E boards with a 2700K.

06/Dec/2016:

I am in the process of installing Win7/Pro/64bit at the moment. The

front and side fans are installed. Next up is fitting the sound

insulation to the side panels.

03/Dec/2016:

I won a second GTX 980 Reference card! See eBay item 182372137418.

04/Nov/2016:

Happy to report I've obtained a GTX 980 with Reference cooler. 8)

See eBay item 282221326067.

11/Oct/2016:

Alas some critical family events prevented me from working on

this build during most of September, and this continues to be an issue

at the moment into October (as I type this, I'm away helping an

elderly relative with care issues). However, I should be able to

get stuck into it again during the second half of October.

On the positive side, the delay has meant that in the meantime

the typical selling prices of used GTX 980s have come down

further, so I've decided to aim for a 980 as the primary GPU

instead of a 970 (it does mean the overall cost is a bit higher,

but the extra performance is worth it). In a similar manner,

although I'll proceed on the assumption that the secondary GPU

will be a GTX 580, if I can sort out a used 780 Ti instead (or a

2nd 980) then I will (ie. selling two 580s should cover the

cost). The latter is 2X faster than a 580, and uses less power,

so it's a worthy change if I can do it. The 580 is still a very

good card for CUDA (two of them beat a Titan), and for certain tasks it is a better choice

than the non-Titan 700s because the 580 is strong for 64bit CUDA (eg. pro audio

processing exploits FP64). This switch isn't certain yet, but

there is time. I've listed the 580s for-sale below, and I'll post

adverts for them next week.

15/Sep/2016:

I'm getting close to bagging the primary GPU! A Palit Jetstream 970 sold for 132 on eBay

today, which is the cheapest 970 I've seen so far; I didn't bid btw because it's not a

model which has an external exhaust cooler. As more people upgrade to newer cards, the

supply of 970s is rising rapidly, so I'm sure it won't be long before I can win a 970 with

a reference cooler for a sensible sum (strangely, people seem to bid more for models with

reference coolers, no idea why given they normally have lower core clocks).

Meanwhile, having originally obtained a Micron C400 256GB SSD for the backup of the C-drive,

I unexpectedly managed to win a second 840 Pro 256GB for an even lower price than

the first, so to heck with the Micron, the backup unit will also be an 840 Pro! It's

obviously best to use identical models if possible, so I'm pleased with this. The extra

840 Pro did cost 5 more than the Micron, but it's worth it.

05/Sep/2016:

I was away for most of August, now off for a short 4-day break, back again as normal on Sep. 12th.

In the meantime though I was able to secure the SSDs for the C-drive and backup drive, pictures

of which I've added above.

30/Jul/2016:

Minor update: I'm away at the moment dealing with a family matter. In the meantime, I've

added the pictures above.

20/Jul/2016:

I have put most of the system together, using one of my own GTX 980s as a temporary GPU, and a

temporary 120GB SSD just for testing (the trays to hold the SSDs are not yet fitted, I won't do

that until the final SSDs have been obtained).

Because the GTX 580 does need one 8pin power connector, I decided the 850W PSU I was

originally going to use was not suitable. One could use molex splitter adapters to feed a

PCIe power link, but I'd rather not do that. Better to have proper PCIe power feeds if

possible. The 1kW version of the Toughpower has enough PCIe ports to supply at least three

GPUs that each need two power inputs, so there is also scope for future expansion.

The GTX 580 itself though is not yet fitted. This is best left until after the initial

OS install is finished (of course later I switched the plan to 980s, but despite this I

decided to stick with the better PSU).

I've not yet fitted the PCIe x1 SATA3 card either. I'll do this once all the main Windows

drivers and updates have been installed.

The front fans are not attached atm, this comes next.

Items For Sale

Here are the items I have available for sale, all proceeds go to funding this project (I

have lots more items to add, will do this next week):

- RamSan 440 512GB DRAM/Flash Enterprise Multi-Port 4Gbit FC Storage Unit: 300 UKP (4.5GB/sec, 600,000 IOPS sustained. See detailed info below!!)

- EVGA GTX 580 3GB PCIe graphics card (772MHz core, 512bit bus, bare card): 55 UKP (two available! Combined, they are faster than a Titan for CUDA)

- 2x Palit GTX 570 1280MB PCIe graphics card (750MHz core, 384bit bus, bare card): 35 UKP each [Card] [Product Label] (good condition, P/N NE5X570S10DA-1101F)

- EVGA GTX 460 1GB SSC FTW PCIe graphics card (867MHz core, 192bit bus, boxed/complete): 30 UKP [Main Box] [The Card] [Product Label] [Specs] (excellent condition!)

- i7 930 CPU (2.8GHz, 3.06GHz Turbo, SLBKP): 20 UKP [Bag and Box] [Close up]

- 5x Seagate 18GB 10000rpm U160 LVD/SE 68pin SCSI disk (ST3148406LW): 5 UKP each [Top Label] [68pin Connector]

- "Flame Master" Gas Soldering Iron (Maplin brand, new/unused): 15 UKP [Box]

- Corsair 120mm fan (new), Model CF12S25SH12A, more than ten available: 3 UKP each [Fan in wrapping] [Product Label]

- 64MB RAM kit for SGI Indy (4x 16MB identical SIMMs, gold edge, single-sided low profile): 10 UKP (SEC)

- 64MB RAM kit for SGI Indy (4x 16MB identical SIMMs, gold edge): 7 UKP (LG Semicon)

- 64MB RAM kit for SGI Indy (4x 16MB identical SIMMs, gold edge): 7 UKP (Siemens, MM branded)

- 1x 1GB RAM kit (2x 512MB DIMMs) for SGI Fuel: 15 UKP (Samsung, SGI PN 030-1746-001)

- 7x 1GB RAM kits (2x 512MB DIMMs) for SGI Fuel: 15 UKP per kit (Samsung, SGI PN 030-1044-001)

- External blue SGI SCSI case fitted with a Seagate 18GB 10K: 25 UKP (2x standard 68pin sockets)

- 4x Colour-faded IndyCam Digital Cameras: 5 UKP each

- 5x Disk sled for SGI Challenge/Onyx: 10 UKP each

- Push-button LED lamp with adhesive pad and batteries (new): 2 UKP [Image]

- "Thor: The Dark World" (DVD, used, good condition): 2 UKP [Cover]

- "2010: The Year We Make Contact" (DVD, used, good condition): 2 UKP [Cover]

- "Manchester United vs. SL Benfica, 1968 European Cup Final, 29/May/1968" (DVD, new/sealed): 5 UKP [Cover]

- "Heat" (Steelcase, bluray, used, good condition): 10 UKP [Cover] [Disc]

- "Terminator: Genisys" (Box-cover edition, bluray, new/sealed): 10 UKP [Outer Box Cover] [Inner Sealed Cover]

- "Elaine Paige: Celebrating 40 Years on Stage" (DVD, new/sealed): 2 UKP [Cover]

- "Puccini: Madame Butterfly (The Classic Composers Series)" (Audio CD, new/sealed): 5 UKP [Cover]

- Complete 9-book Dragonlance Series (original Trilogy, Legends trilogy, Preludes trilogy): 25 UKP [Image] (ideal for newcomers to fantasy fiction, or younger readers!)

- "Prince of the Blood", by Raymond E. Feist (used): 2 UKP [Cover]

- 2x Centronics/25pin 1m SCSI cable (used): 2 UKP each [Image] (25pin connector is the old style parallel type)

- Centronics/68pin 1m SCSI cable (used): 5 UKP [Image]

- Standard Centronics-type to 25pin older type printer cable: 2 UKP [Image]

- 2-way PS2/VGA KVM unit (2m cabling): 5 UKP [Overall Image] [Unit Closeup] [Product Label]

- Pack of four PS2/USB adapters: 1 UKP [Image]

RamSan 440: The peak of storage tech in 2008, and costing $275000 when new, this unit employs

512GB of DDR2 DRAM to provide 4.5GB/sec sustained I/O and 600,000 IOPS, with an access latency of

less than 15 microseconds (much quicker than an SSD). It also includes 512GB of RAID-protected

Flash to provide continuous backup (it's actually over 680GB, but about a third is used for

supreme over-provisioning). The unit can be connected via up to eight 4Gbit FibreChannel ports (or

four Infiniband ports), and includes fully redundant N+1 PSUs/fans. Ideal for critical 24/7 data,

databases, low latency transaction data, metadata, etc. Still very potent! Peak power usage is

650W, 4U size for 19" rackmount, max weight 90lbs. Looks like

this.
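To put the over-provisioning figure in context, here's a quick Python check of the arithmetic, treating "over 680GB" as exactly 680GB (an assumption; the real raw capacity is a little higher):

```python
USABLE_GB = 512  # RAID-protected Flash available for backup (from the text)
RAW_GB = 680     # "over 680GB" of raw Flash, rounded down here (assumption)

spare_gb = RAW_GB - USABLE_GB                 # Flash held back for over-provisioning
share_of_raw = spare_gb / RAW_GB * 100        # spare as a percentage of raw Flash
share_of_usable = spare_gb / USABLE_GB * 100  # spare relative to usable capacity

print(f"Spare: {spare_gb}GB")
print(f"{share_of_raw:.1f}% of raw Flash, {share_of_usable:.1f}% of usable capacity")
```

That works out to about 168GB of spare Flash: roughly a quarter of the raw capacity, or about a third on top of the usable 512GB.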

Here's a detailed PDF,

plus a 2008 article from The

Register, and an article from

Reactive

Data. My thanks to Rob Bone at XSNet for donating this item to help with the build.

Credits List

Here's a summary of all those who've helped in this venture, itemising where the raised

funds have come from, though in some cases a buyer/donator may wish to remain anonymous

or keep the details of what they bought/donated private.

KEY: NYS = Not Yet Sold

DONATION CREDITS:

Name (with permission)    Nekochan ID (if any)   Donation                          Amount Raised (UKP)
Rob Bone at XSNet (USA)   -                      RamSan 440 Storage Unit           NYS
Jeb Mayers                jebmayers              Some older PC GPUs                NYS
Michael Pagel             thegoldbug             Direct                            50
Jonathan Mortimer         -                      Direct                            25

ITEMS SOLD CREDITS (waiting for each buyer to tell me if they'd like to be credited):

Name (with permission)    Nekochan ID (if any)   Item(s) Sold                      Amount Raised (UKP)
Andrew Heath              -                      James May's Toy Stories DVD       5
Andrew Heath              -                      Hitch Hikers 5-book box set       10
Mark Davies               uunix                  128MB RAM kit for SGI Indy        25
Dirk Twisk                twix                   3x 64MB RAM kit for SGI Indigo    21
Raphael Vallotton         BetXen                 128MB RAM kit for SGI Indy        25
Raphael Vallotton         BetXen                 Colour-faded IndyCam              5
Alexander Tafarte         xiri                   128MB RAM kit for SGI Indy        20
Alexander Tafarte         xiri                   128MB RAM kit for SGI Indy        17
Frank Everdij             dexter1                128MB RAM kit for SGI Indy        15
Frank Everdij             dexter1                64MB RAM kit for SGI Indy         5
Alexander Tafarte         xiri                   64MB RAM kit for SGI Indy         5
Christian Neubert         FlasBurn               2x 1GB RAM kit for SGI Fuel       30
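As a cross-check on the running total, here's a small Python tally of the cash figures in the two credits tables; entries marked NYS are left out since they haven't raised anything yet. The sum should match the "Amount Raised So Far" figure at the top of the page.

```python
# Cash donations (UKP) from the DONATION CREDITS table; NYS items excluded.
donations = {
    "Michael Pagel": 50,
    "Jonathan Mortimer": 25,
}

# (buyer, item, amount raised in UKP) from the ITEMS SOLD CREDITS table.
items_sold = [
    ("Andrew Heath", "James May's Toy Stories DVD", 5),
    ("Andrew Heath", "Hitch Hikers 5-book box set", 10),
    ("Mark Davies", "128MB RAM kit for SGI Indy", 25),
    ("Dirk Twisk", "3x 64MB RAM kit for SGI Indigo", 21),
    ("Raphael Vallotton", "128MB RAM kit for SGI Indy", 25),
    ("Raphael Vallotton", "Colour-faded IndyCam", 5),
    ("Alexander Tafarte", "128MB RAM kit for SGI Indy", 20),
    ("Alexander Tafarte", "128MB RAM kit for SGI Indy", 17),
    ("Frank Everdij", "128MB RAM kit for SGI Indy", 15),
    ("Frank Everdij", "64MB RAM kit for SGI Indy", 5),
    ("Alexander Tafarte", "64MB RAM kit for SGI Indy", 5),
    ("Christian Neubert", "2x 1GB RAM kit for SGI Fuel", 30),
]

donated = sum(donations.values())
sold = sum(amount for _, _, amount in items_sold)
total = donated + sold

print(f"Donations: {donated} UKP, items sold: {sold} UKP, total: {total} UKP")
```

This prints 75 UKP from donations and 183 UKP from sales, 258 UKP in all, which agrees with the figure at the top of the page.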
